How to Reduce Bias in Candidate Screening with Structured Evaluation

By Beatview Team · Thu Apr 23 2026 · 15 min read

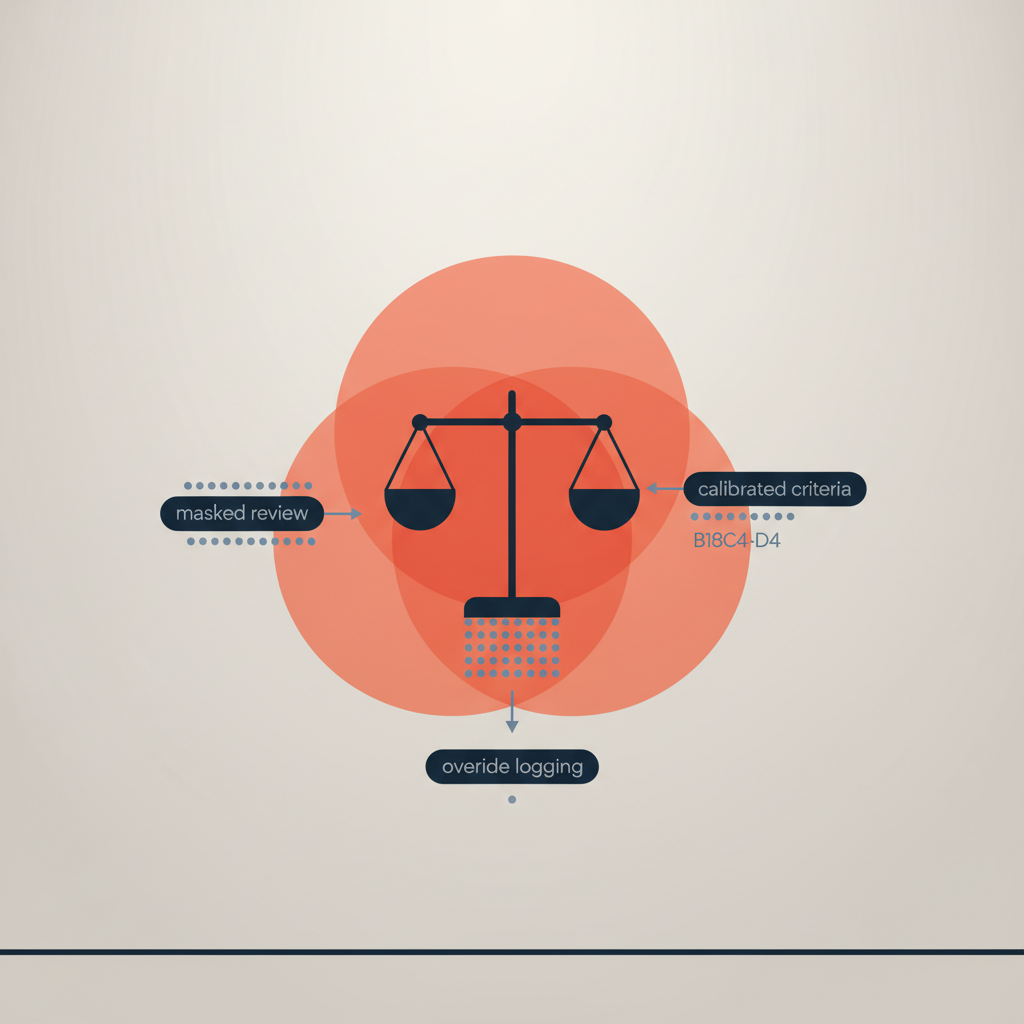

A step-by-step guide to reduce bias in candidate screening using structured evaluation. Learn how masked review, calibrated criteria, override logging, and continuous monitoring create a fair, auditable, and high‑quality early funnel—plus how Beatview fits.

To reduce bias in candidate screening, use a structured evaluation workflow: define job-relevant criteria from a job analysis, score resumes and early interviews against calibrated rubrics, mask sensitive attributes, require human-in-the-loop overrides with reason codes, and continuously monitor adverse impact. This approach produces fairer, more predictive shortlists while creating an audit trail for compliance and trust.

Structured evaluation reduces bias in candidate screening by replacing unstructured judgment with rubric-based scoring, masked review, and logged overrides. Calibrated criteria aligned to job analysis, plus adverse impact monitoring (e.g., 4/5ths rule), deliver fairer outcomes and higher predictive validity. Tools like Beatview enable explainable scoring, human oversight, and auditable decisions across resume screening and structured AI interviews.

What does “bias in candidate screening” mean and why does structure help?

Bias in candidate screening refers to systematic, non–job-related distortions in how applicants are evaluated, such as giving undue weight to name-origin, school prestige, age signals, or unstandardized impressions from resumes. The EEOC’s Uniform Guidelines define adverse impact as selection rate disparities, often measured by the 4/5ths rule: any group’s selection rate should be at least 80% of the highest group’s rate. Early-funnel bias compounds later, shrinking diversity in final slates.
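As a rough illustration of the 4/5ths rule described above, the sketch below divides each group's selection rate by the highest group's rate and flags anything under 0.80. The function name, group labels, and counts are hypothetical and chosen only to make the arithmetic visible; real analyses usually also need minimum sample sizes and legal review.

```python
def adverse_impact_ratios(applicants, selected):
    """Return each group's selection rate divided by the highest group's rate."""
    # Selection rate = selected / applicants, per group (groups with no applicants are skipped).
    rates = {g: selected.get(g, 0) / n for g, n in applicants.items() if n > 0}
    if not rates:
        raise ValueError("no groups with applicants")
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {g: rate / top for g, rate in rates.items()}


# Hypothetical counts for illustration only.
applicants = {"group_a": 200, "group_b": 150}
selected = {"group_a": 60, "group_b": 27}

ratios = adverse_impact_ratios(applicants, selected)
# Groups whose ratio falls below the 4/5ths (0.80) threshold.
flagged = sorted(g for g, r in ratios.items() if r < 0.80)
print(ratios, flagged)
```

Here group_a is selected at 30% and group_b at 18%, so group_b's ratio is 0.18 / 0.30 = 0.60 and it is flagged for investigation.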

Structured evaluation is a standardized, job-related method for assessing applicants against explicit criteria and scoring rubrics. Meta-analytic evidence indicates that structured approaches are more predictive: research by Schmidt & Hunter and subsequent updates reports higher validity for structured interviews (~0.51) than for unstructured formats (~0.38), while work samples and cognitive measures perform comparably or higher when job-relevant. Structure reduces noise and irrelevant signals.

Unstructured resume reviews typically rely on intuition and heuristics, which introduce inconsistency across reviewers and over-weight superficial cues. By contrast, structured workflows translate a job analysis into measurable criteria (e.g., “SQL joins at scale,” “B2B stakeholder navigation”) with behaviorally anchored rating scales and documentation. This creates comparability across candidates and transparency for audits.

| Screening Approach | Mechanism | Bias Risk | Auditability | Avg. time/100 resumes | Predictive validity (relative) | Compliance readiness |
|---|---|---|---|---|---|---|
| Unstructured resume review | Free-form visual scan and notes | High (name, school, gap bias) | Low (few artifacts to audit) | 180–240 minutes | Low–moderate (noisy) | Weak; hard to show job-relatedness |
| ATS keyword filters | Rules match static terms/phrases | Moderate (credential bias) | Moderate (rules exportable) | 30–60 minutes | Moderate (recall/precision tradeoffs) | Improved if criteria are job-derived |
| Structured evaluation (AI + human) | Rubrics, masked review, overrides | Lower (focus on job signals) | High (scoring + reason codes) | 10–30 minutes | High (aligned to job analysis) | Strong; supports EEOC/OFCCP audits |

How structured evaluation reduces bias in candidate screening

Structured evaluation breaks screening into discrete, repeatable steps aligned to job-related evidence. Criteria are derived from a job analysis: tasks, required knowledge/skills/abilities (KSAs), and proficiency levels. These are operationalized into scoring rubrics with behaviorally anchored ratings (e.g., 1 = “mentions SQL,” 3 = “joins and indexes,” 5 = “designs query plans for 100M+ rows”). Reviewers and models score consistently against these rubrics, not intuition.
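To make the rubric idea concrete, here is a minimal sketch of a weighted rubric score on a 1 to 5 scale. The `Criterion` class, the criterion names, and the weights are assumptions for illustration (the third criterion in particular is invented to make the weights sum to 1.0); the SQL anchors are the ones quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float   # share of the total score; all weights should sum to 1.0
    anchors: dict   # rating -> behavioral anchor text shown to reviewers


def weighted_score(criteria, ratings):
    """Combine per-criterion ratings (1-5) into one weighted rubric score."""
    if abs(sum(c.weight for c in criteria) - 1.0) > 1e-9:
        raise ValueError("criterion weights must sum to 1.0")
    total = 0.0
    for c in criteria:
        rating = ratings[c.name]  # a missing criterion raises KeyError on purpose
        if not 1 <= rating <= 5:
            raise ValueError(f"rating for {c.name} must be between 1 and 5")
        total += c.weight * rating
    return total


# Hypothetical rubric: names and weights are illustrative assumptions.
rubric = [
    Criterion("sql", 0.30, {1: "mentions SQL", 3: "joins and indexes",
                            5: "designs query plans for 100M+ rows"}),
    Criterion("stakeholder_skills", 0.20, {}),
    Criterion("domain_knowledge", 0.50, {}),
]

score = weighted_score(rubric, {"sql": 5, "stakeholder_skills": 3, "domain_knowledge": 4})
print(round(score, 2))
```

With these inputs the score works out to 0.30 × 5 + 0.20 × 3 + 0.50 × 4 = 4.1. Failing loudly on a missing or out-of-range rating keeps partially scored candidates from slipping into a ranking.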

Masked review hides or deemphasizes non-job signals such as name, photo, graduation year, and school. This can be partial (mask during initial triage) or complete (until final slate). Masking removes cues that trigger unconscious bias without withholding job-relevant evidence like certifications or domain-specific tools—if they are validated by job analysis.
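One way to picture partial versus complete masking is a small view function that decides, per stage, whether a reviewer sees the raw record. The field names, stage names, and placeholder below are hypothetical; a real system would need to mask free text and attachments as well, which this sketch does not attempt.

```python
# Hypothetical field names for non-job signals; adapt to your own schema.
MASKED_FIELDS = frozenset({"name", "photo_url", "graduation_year", "school"})
PLACEHOLDER = "[masked]"


def mask_candidate(record, masked_fields=MASKED_FIELDS):
    """Return a copy of the record with non-job fields replaced by a placeholder."""
    return {k: (PLACEHOLDER if k in masked_fields else v) for k, v in record.items()}


def view_for_stage(record, stage, complete=False):
    """Partial masking hides fields during triage only; complete masking
    keeps them hidden until the final slate."""
    masked_stages = {"triage", "shortlist"} if complete else {"triage"}
    if stage in masked_stages:
        return mask_candidate(record)
    return dict(record)  # unmasked copy; the stored record is never modified


candidate = {
    "name": "Example Person",
    "school": "Example University",
    "graduation_year": 2009,
    "certifications": ["Example license"],
    "skills": ["SQL"],
}

triage_view = view_for_stage(candidate, "triage")
print(triage_view)
```

Job-relevant evidence such as `certifications` and `skills` passes through untouched, and the original record stays available for compliance or candidate queries.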

Override logging requires any manual deviation from model or rubric-recommended scores to include a reason code (e.g., “portfolio evidence outweighs tenure,” “regulatory license required”). This creates an audit trail that can be sampled for consistency, helps calibrate reviewers, and deters arbitrary decisions. When paired with adverse impact monitoring, teams can correct drift quickly.
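A minimal sketch of an override log entry follows, assuming an in-memory list as the audit log and a small set of made-up reason codes. The record fields mirror the ones this article names (reviewer ID, timestamp, before and after values); everything else, including the function and code names, is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical reason codes; a real deployment would define its own controlled list.
REASON_CODES = {"PORTFOLIO_EVIDENCE", "LICENSE_REQUIRED"}


@dataclass(frozen=True)
class OverrideRecord:
    candidate_id: str
    reviewer_id: str
    criterion: str
    score_before: int
    score_after: int
    reason_code: str
    justification: str
    timestamp: str


def log_override(audit_log, *, candidate_id, reviewer_id, criterion,
                 score_before, score_after, reason_code, justification=""):
    """Append an override to the audit log; refuse it without a known reason code."""
    if reason_code not in REASON_CODES:
        raise ValueError(f"unknown or missing reason code: {reason_code!r}")
    record = OverrideRecord(
        candidate_id, reviewer_id, criterion, score_before, score_after,
        reason_code, justification,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    audit_log.append(record)
    return record


audit_log = []
entry = log_override(
    audit_log, candidate_id="cand-001", reviewer_id="rev-17", criterion="sql",
    score_before=3, score_after=4, reason_code="PORTFOLIO_EVIDENCE",
    justification="portfolio evidence outweighs tenure",
)
print(entry)
```

Making the record immutable and rejecting unknown codes at write time is one way to keep the trail sample-able later; a production system would persist it rather than hold it in memory.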

Calibrated criteria ensure that what’s measured is job-related and consistently interpreted. Calibration sessions compare ratings on a small set of known profiles, reconcile disagreements, and update rubric anchors. Practitioners often target inter-rater reliability coefficients of 0.7+ before scaling. Documented calibration demonstrates due diligence if challenged.
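The article does not say which reliability coefficient to use, so the sketch below picks Cohen's kappa for two raters as one common option. It treats ratings as unordered categories; for ordinal rubric scales, a weighted kappa or an intraclass correlation may be a better fit. The ratings are invented calibration data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same profiles."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must score the same non-empty set of profiles")
    n = len(rater_a)
    # Observed agreement: share of profiles where both raters gave the same rating.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement by chance, from each rater's marginal rating frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical ratings from a calibration session on ten known profiles.
rater_a = [1, 3, 5, 3, 5, 1, 3, 5, 3, 1]
rater_b = [1, 3, 5, 3, 3, 1, 3, 5, 5, 1]

kappa = cohens_kappa(rater_a, rater_b)
print(round(kappa, 3))
```

These two raters agree on 8 of 10 profiles, yet kappa comes out just under 0.70 once chance agreement is removed, which under the 0.7+ target would suggest another calibration pass before scaling.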

A step-by-step workflow to reduce bias in hiring screening

The following operational sequence can be implemented with or without AI. With an explainable platform, it becomes faster and more auditable without removing human oversight. Each step is designed to be independently reviewable.

1. Interview top performers and hiring managers to document critical tasks, KSAs, and contextual constraints. Translate tasks into observable signals (e.g., “operates CNC to ±0.01mm”). Retain evidence sources (work samples, portfolios, licenses).

2. Create 5–7 core criteria with behaviorally anchored scales. Weight criteria by impact (e.g., SQL mastery 30%, stakeholder skills 20%). Avoid proxies that inflate bias (school prestige) unless validated as job-related.

3. Hide name, photo, age-coded dates, and school for the first pass. Allow unmasking only after shortlisting. Ensure recruiters can access underlying evidence on demand for compliance or candidate queries.

4. Use NLP to extract skills, tenure, seniority signals, and outcomes (e.g., “reduced churn 12%”). Summarize against each rubric criterion for reviewer focus. Keep tokenized features and raw text available for audit.

5. Apply weighted scoring and pairwise comparisons for ties. Generate confidence intervals; flag low-confidence cases for human review. Never auto-reject solely on model score; use score to prioritize human attention.

6. When a reviewer adjusts a score or decision, collect a reason code and free-text justification tied to criterion. Keep timestamps, reviewer IDs, and before/after values for the audit log.

7. Use standardized questions mapped to criteria with scoring guides. Recordings and transcripts enable reliability checks. Combine interview scores with resume rubric for holistic ranking.

8. Compute selection rates by protected class where legally permissible and practical. Investigate any subgroup ratio below 0.80. Adjust job-related criteria or outreach mix before the next cycle.

9. Hold monthly calibration to review overrides, borderline cases, and performance outcomes. Update anchors and weights based on signal-to-noise and downstream job performance.
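The scoring step above says to flag low-confidence cases and never auto-reject on model score alone. A minimal sketch of that routing, assuming each candidate carries a hypothetical `score` and a `confidence` value between 0 and 1 (the threshold and route labels are also assumptions):

```python
def build_review_queue(candidates, min_confidence=0.6):
    """Order candidates for human review. Nobody is rejected here:
    the model score only sets priority, and low-confidence cases are flagged."""
    low = [c for c in candidates if c["confidence"] < min_confidence]
    ok = [c for c in candidates if c["confidence"] >= min_confidence]
    low.sort(key=lambda c: c["confidence"])            # least certain first
    ok.sort(key=lambda c: c["score"], reverse=True)    # highest score first
    return (
        [{**c, "route": "human_review_low_confidence"} for c in low]
        + [{**c, "route": "standard_review"} for c in ok]
    )


# Hypothetical scored candidates.
candidates = [
    {"id": "c1", "score": 4.2, "confidence": 0.9},
    {"id": "c2", "score": 3.1, "confidence": 0.4},
    {"id": "c3", "score": 4.6, "confidence": 0.8},
]

queue = build_review_queue(candidates)
print([(c["id"], c["route"]) for c in queue])
```

Every input candidate appears in the output queue, so the function cannot silently drop anyone; placing low-confidence cases first is a design choice, not a requirement.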

Implementation considerations: integration, governance, and compliance

Integration requirements vary with ATS maturity. At minimum, plan for resume ingestion (PDF, DOCX), profile enrichment, and score write-back. Many teams use webhooks to move candidate IDs and status codes. If using AI to summarize evidence, retain both extracted features and raw text to support audit requests and error correction. Ensure your vendor supports exportable logs via API.

Change management is essential. Recruiters need training on rubric use, override reason codes, and when to unmask. Calibration sessions should be built into hiring manager SLAs. Expect a 2–3 cycle learning curve before scores stabilize. Communicate that structure focuses human judgment rather than replacing it, by elevating job-relevant signals.

Compliance and privacy guardrails should be explicit. Under GDPR Article 22, avoid solely automated decisions with legal or similarly significant effects. Keep a human-in-the-loop and a documented right to explanation. U.S. employers should maintain job-relatedness evidence per EEOC guidelines and monitor adverse impact. Jurisdictions such as NYC Local Law 144 require annual bias audits and candidate notices for automated employment decision tools; verify scope with counsel and ensure your vendor can produce subgroup performance reports.

Security posture should be transparent. Ask for data residency options, encryption at rest and in transit, role-based access controls, and retention policies. Beatview’s security practices are outlined at /security, and technical details are documented at /documentation. Align your approach with the broader considerations described in AI in Hiring: Benefits, Risks, Compliance, and Responsible Adoption.

Vendor and approach evaluation framework

When selecting tools to reduce bias in candidate screening, assess beyond feature checklists. Use criteria that connect to predictive validity, fairness, and auditability. The table below enumerates decision factors, how to test them, and target benchmarks.

| Decision Criterion | How to Evaluate | Benchmark/Target | Why it Matters |
|---|---|---|---|
| Bias mitigation capability | Ask for masked review, subgroup metrics, and override logs in a pilot | Adverse impact ratios ≥ 0.80 with documented remediation steps | Demonstrates fair screening and continuous improvement |
| Explainability and auditability | Request criterion-level scores, reason codes, and exportable logs | Full decision trace with timestamps and reviewer IDs | Supports EEOC/OFCCP audits and internal governance |
| Predictive validity | Correlate rubric totals with 90–180 day performance proxies | r ≥ 0.3 for early funnel; improving trend over 2–3 cycles | Links screening to on-the-job outcomes |
| Integration complexity | Pilot in 30 days with ATS sync and SSO | < 4 weeks to MVP; API coverage for import/export | Limits operational drag and adoption risk |
| Human-in-the-loop controls | Override workflows, panel scoring, unmasking thresholds | Reason codes mandatory; panel averaging available | Prevents over-automation and ensures due process |
| Cost structure | Model inference cost vs recruiter time saved | Reduce review time by >70% at comparable CPH | Ensures ROI without compromising fairness |
| Security & privacy posture | Review SOC certs, data isolation, retention controls | Encryption at rest/in transit; role-based access; DSR support | Protects candidate data and regulatory alignment |
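The predictive validity row in the table above can be checked with a plain Pearson correlation between rubric totals and a later performance proxy. The sketch below computes r by hand; the four data points are invented and far too few for a real validity study, which would need many more hires and attention to range restriction.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sequence is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical rubric totals at screening and a 90-180 day performance proxy.
rubric_totals = [2.0, 3.0, 4.0, 5.0]
performance = [1.0, 3.0, 2.0, 4.0]

r = pearson_r(rubric_totals, performance)
print(round(r, 2), r >= 0.3)
```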

Favor vendors that operationalize structure—rubrics, masking, overrides, and subgroup metrics—over black-box scoring. If you cannot export a full decision trace, you cannot defend your process.

Mechanics under the hood: how these tools actually work

Modern resume screening combines deterministic extraction and probabilistic modeling. Deterministic parsers read contact info, dates, and sections, while NLP models detect skills and outcomes (“increased NPS from 48 to 62”). Features are mapped to rubric criteria and aggregated with weights. Pairwise ranking reduces sensitivity to absolute scale, improving stability across profiles.
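To see why pairwise ranking is less sensitive to absolute scale, consider a simple weighted win-count scheme: for every pair of candidates, the one with the higher score on a criterion earns that criterion's weight. This is only one illustrative way to rank pairwise and is not a description of how any particular vendor does it; the candidate IDs, weights, and scores are hypothetical.

```python
from itertools import combinations


def pairwise_rank(scores, weights):
    """Rank candidates by weighted pairwise wins across rubric criteria."""
    wins = {cid: 0.0 for cid in scores}
    for a, b in combinations(scores, 2):
        for criterion, weight in weights.items():
            score_a, score_b = scores[a][criterion], scores[b][criterion]
            if score_a > score_b:
                wins[a] += weight
            elif score_b > score_a:
                wins[b] += weight
            # Equal scores on a criterion award nothing to either side.
    # Highest win total first; candidate ID breaks exact ties deterministically.
    return sorted(wins, key=lambda cid: (-wins[cid], cid))


weights = {"sql": 0.6, "stakeholder": 0.4}
scores = {
    "c1": {"sql": 5, "stakeholder": 2},
    "c2": {"sql": 4, "stakeholder": 4},
    "c3": {"sql": 3, "stakeholder": 3},
}

ranking = pairwise_rank(scores, weights)
print(ranking)
```

Because only the ordering within each criterion matters, doubling every score (a reviewer who rates on a more generous scale) leaves the ranking unchanged.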

Explainability techniques quantify feature contributions to each criterion score. Token-level attributions or SHAP-like methods show why a candidate scored “4” on “Salesforce pipeline management.” This enables targeted overrides (“portfolio link validated multi-stage pipeline ownership”) and supports coaching for candidates or hiring managers who ask for specifics.

Fairness controls typically operate at three layers: pre-processing (masking and balanced training sets for learned components), in-processing (regularization or constraints to reduce proxy reliance), and post-processing (score adjustments, tie-breaking rules, and subgroup monitoring). A mature platform exposes these controls and their tradeoffs rather than hiding them.

Masked Review

Suppresses non-job signals (name, school, age-coded dates) during triage to reduce unconscious bias triggers. Enable selective unmasking after shortlist creation with justification logging.

Structured AI Interviews

Standardized prompts mapped to rubrics, with scoring guides and transcripts. Aggregates criterion-level scores and flags low-confidence items for second-rater review to raise reliability.

Override Logging

Captures human adjustments with reason codes tied to criteria. Supports calibration reviews and adverse impact root-cause analysis; prevents silent drift from structured rules.

Real-world scenarios: what measurable change looks like

Mid-market fintech (2,200 employees) screening ~5,000 monthly applicants for product and analytics roles faced prestige bias and resume inflation. They implemented rubric-based screening with masked school names and structured phone screens. Average review time dropped from 23 minutes per resume to 2.7 minutes while maintaining qualified rate. Female representation in final slates rose 18%, and adverse impact ratio for underrepresented groups improved from 0.68 to 0.88 in three cycles. Hiring manager satisfaction (post-handoff NPS) improved from 32 to 55.

Global manufacturing enterprise (18,000 employees) hiring 600+ technicians annually struggled with gap-year and age-coded bias. They used explicit KSAs (GD&T reading, safety compliance), requested work sample evidence, and required override reason codes. Within two quarters, 90-day attrition decreased 28%, safety incidents per 100 new hires fell 20%, and time-to-fill in high-volume plants improved by 22% without widening adverse impact. Calibration sessions cut inter-rater score variance by 35%.

Tradeoffs you should manage deliberately

Cost vs. accuracy: deterministic keyword filters are cheap but brittle; they miss unconventional profiles and over-weight credential proxies. Structured scoring with AI summaries increases inference cost but reduces human time and raises shortlist quality. A good rule: keep AI costs under 10–20% of recruiter time saved per requisition.
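The 10–20% rule of thumb is simple arithmetic, sketched below with invented figures. The function name and all inputs (AI cost per requisition, minutes saved, recruiter hourly cost) are assumptions for illustration.

```python
def ai_cost_share(ai_cost, minutes_saved, recruiter_hourly_cost):
    """AI spend as a fraction of the value of recruiter time saved on one requisition."""
    saved_value = minutes_saved / 60 * recruiter_hourly_cost
    if saved_value <= 0:
        raise ValueError("no recruiter time saved; the ratio is undefined")
    return ai_cost / saved_value


# Hypothetical requisition: 45.00 in inference cost, 600 minutes saved,
# recruiter time valued at 50.00 per hour.
share = ai_cost_share(ai_cost=45.0, minutes_saved=600, recruiter_hourly_cost=50.0)
within_rule = share <= 0.20  # upper end of the 10-20% guideline
print(round(share, 2), within_rule)
```

In this example the time saved is worth 500.00, so the AI cost share is 45 / 500 = 0.09, inside the guideline.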

Automation vs. human judgment: fully automated rejection increases legal risk and erodes trust. Keep humans in the loop for edge cases and allow reasoned overrides. Use model scores to prioritize, not decide. This aligns with GDPR Article 22’s caution on solely automated decisions and supports candidate experience.

Speed vs. thoroughness: masking and scoring add steps, but net throughput improves once configured. Shorten the loop by templating rubrics and scheduling monthly calibration. If throughput suffers, reduce criteria to the 5–7 that most influence performance; breadth without reliability adds noise.

Standardization vs. flexibility: one rubric cannot serve every role. Create role-specific templates but enforce global governance: reason codes, audit logs, and subgroup metrics. Flex within a controlled backbone preserves fairness while respecting job differences.

How Beatview fits into a structured, auditable screening workflow

Beatview is designed for explainable, human-in-the-loop hiring. In resume screening, Beatview parses resumes, masks non-job attributes, and maps extracted evidence to your rubric criteria. Recruiters see criterion-level scores with feature contributions and can adjust with required reason codes. All actions are logged for audit sampling and bias review. See /resume-screening and /features for details.

For early interviews, Beatview supports structured AI interviews with standardized question banks mapped to the same criteria used in resume triage. Transcripts, confidence scores, and panel rating workflows improve inter-rater reliability and reduce scheduling delays. The same governance model—masking where appropriate, overrides with reason codes, and subgroup reporting—applies. See /ai-interviews and /work-style-assessment.

Compliance workflows include adverse impact dashboards, exportable decision traces, and candidate notices where required. Security controls, data handling, and API documentation are available at /security and /documentation. Pricing tiers scale by seat and usage: /pricing.

How to choose and implement—an actionable decision method

Use this practical method to evaluate, pilot, and scale a fair screening process. It balances methodological rigor with operational pragmatism and is suitable for teams at top-of-funnel and mid-funnel (TOFU/MOFU) stages.

1. Set explicit targets: reduce review time by 60%+, maintain or raise qualified rate, avoid subgroup ratios below 0.80. Agree to human-in-the-loop and documented overrides.

2. Select 5–7 criteria from a job analysis. Draft anchors and weights. Socialize with hiring managers for buy-in; this lowers calibration friction.

3. Pick one high-volume, repeatable role (e.g., SDR, technician). Run A/B screening: legacy vs structured. Measure time, qualified rate, subgroup ratios, and downstream performance proxies.

4. Turn on masking, override reasons, and decision logging. Ensure daily exports to your data warehouse for monitoring. Put a 2-hour SLA on overrides to maintain velocity.

5. Hold two calibration sessions in the first month. Update anchors based on reviewer drift. Publish the rubric and governance policy internally.

6. Template what worked and replicate to adjacent roles. Maintain a monthly fairness review and quarterly audit sampling of overrides.
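The pilot targets above (review time down 60%+, qualified rate maintained or raised, no subgroup ratio below 0.80) can be turned into a simple pass/fail check on the A/B results. The dictionary keys and all numbers below are hypothetical.

```python
def pilot_checks(legacy, structured, min_time_reduction=0.60, min_impact_ratio=0.80):
    """Compare the structured arm of an A/B pilot against the legacy arm."""
    time_reduction = 1 - structured["minutes_per_resume"] / legacy["minutes_per_resume"]
    return {
        "review_time": time_reduction >= min_time_reduction,
        "qualified_rate": structured["qualified_rate"] >= legacy["qualified_rate"],
        # The lowest subgroup ratio decides whether the fairness target is met.
        "adverse_impact": min(structured["impact_ratios"].values()) >= min_impact_ratio,
    }


# Hypothetical pilot results for one high-volume role.
legacy = {"minutes_per_resume": 10.0, "qualified_rate": 0.20}
structured = {
    "minutes_per_resume": 3.0,
    "qualified_rate": 0.22,
    "impact_ratios": {"group_a": 1.0, "group_b": 0.78},
}

checks = pilot_checks(legacy, structured)
print(checks)
```

In this example the pilot hits the speed and quality targets but misses the fairness target, so the next step would be investigating which criteria or stages drive the 0.78 ratio before scaling.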

“If you cannot explain why a candidate advanced or was rejected in one page, your process is not yet structured.”

FAQ: reducing bias in candidate screening with structured evaluation

What’s the difference between structured evaluation and keyword filters?

Keyword filters match text tokens to include/exclude resumes, which can over-weight credentials and miss nonstandard profiles. Structured evaluation maps job analysis criteria to behaviorally anchored rubrics, then scores evidence per criterion. For example, “pipeline ownership” is scored from transcript and resume signals, not just the presence of “Salesforce.” Teams typically see a 60–70% time reduction and improved shortlist quality when moving from rules to rubrics.

How do we measure if our screening process is fair?

Track selection rates by protected classes where permissible and compute adverse impact ratios using the 4/5ths rule. Investigate any ratio below 0.80 by reviewing which criteria or stages drive the gap. Pair this with inter-rater reliability checks (target ≥ 0.7) and override audits. In a three-cycle pilot, one client raised its adverse impact ratio from 0.72 to 0.90 by masking school names and recalibrating two rubric anchors.

Does masked review hide useful signals we need?

Masking should suppress non-job signals (name, photo, age-coded dates, school brand) while preserving validated job evidence (licenses, specific tools). In a manufacturing pilot, masking graduation year and school names had no negative effect on qualified rate but improved subgroup ratios to 0.86. Allow controlled unmasking post-shortlist with reasoned overrides to restore context when needed.

Is using AI in screening compliant with GDPR and local laws?

Under GDPR Article 22, avoid solely automated decisions with significant effects; keep a human in the loop, offer explanations, and allow contestation. Some locales (e.g., NYC Local Law 144) mandate annual bias audits and candidate notices for automated employment decision tools. Choose vendors that provide exportable logs, subgroup metrics, and notice templates. Beatview supports human oversight, reason-code logging, and audit exports.

How quickly can we implement structured screening?

Teams commonly reach a production pilot in 3–4 weeks: week 1 job analysis and rubric drafting; week 2 integration and masking configuration; week 3 calibration and dry runs; week 4 live A/B. In one fintech, review time dropped from 23 to 2.7 minutes/resume by week 6 while maintaining qualified rates and improving final-slate diversity by 18%.

What metrics tie structured screening to business outcomes?

Key metrics include time-to-review, qualified rate, onsite-to-offer, 90-day attrition, and performance proxies (e.g., quota attainment, incident rates). After introducing rubrics and overrides, one enterprise cut 90-day attrition by 28% and reduced safety incidents 20%, indicating better fit, not just faster screening.

Reducing bias in candidate screening is not a single feature; it is a governance-backed workflow that converts job requirements into consistent, explainable decisions. With structured evaluation—rubrics, masking, reasoned overrides, and continuous monitoring—you improve fairness, prediction, and trust simultaneously. Platforms like Beatview help operationalize this with audit-ready logs and human oversight.

Explore capabilities and controls at /features, review security at /security, and examine technical configuration in /documentation.

Tags: reduce bias in candidate screening, bias in candidate screening, fair screening process, reduce bias in hiring screening, structured evaluation bias, structured interviews, adverse impact analysis, EEOC 4/5ths rule